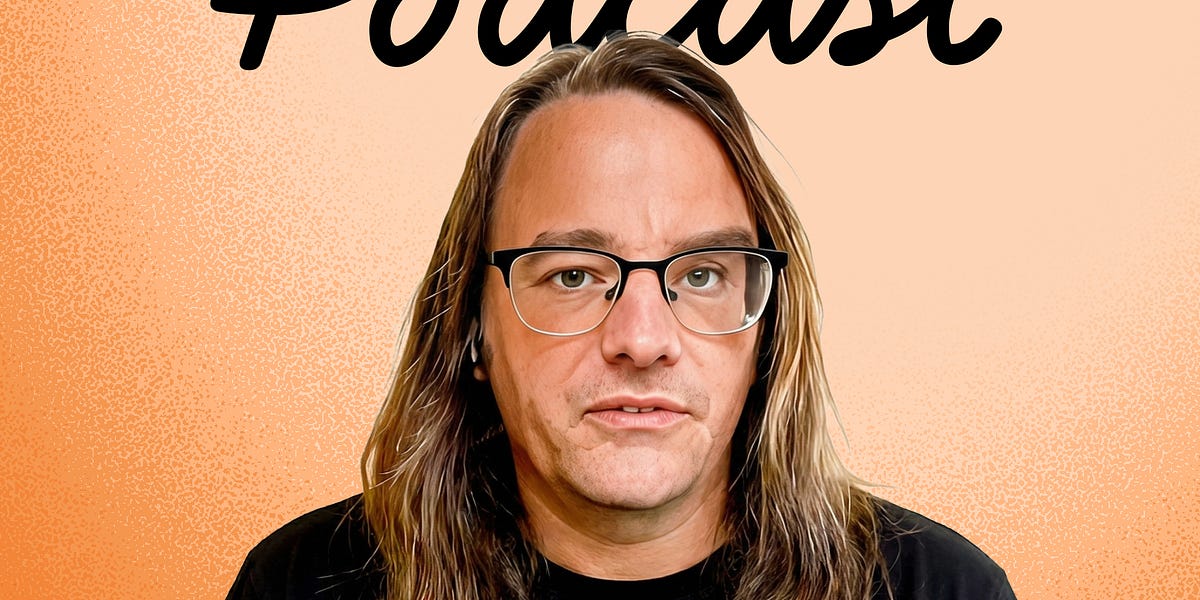

This article discusses an interview with Simon Willison, co-creator of the Django web framework and author of open source tools for working with LLMs such as Datasette and the LLM command-line utility. The conversation covers the current state of large language models (LLMs), their potential applications, and the challenges they raise.

Some key takeaways from the conversation include:

- LLMs are advancing rapidly: Simon notes that the past six months have brought significant progress, with models becoming noticeably more capable.

- AI security is a growing concern: The conversation highlights the risks that come with LLMs, in particular prompt injection, where instructions hidden in content the model processes override the instructions its developer intended, letting an attacker manipulate the system.

- The "lethal trifecta" of AI agents: Simon introduces the "lethal trifecta," the combination of access to private data, exposure to untrusted content, and the ability to communicate externally. An agent that has all three can be tricked by injected instructions into sending private data to an attacker.
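
The trifecta is a property of an agent's whole tool set rather than of any single tool, which a small audit function makes concrete. This is a minimal sketch under stated assumptions: `Capability`, `Tool`, `trifecta_gaps`, and `has_lethal_trifecta` are hypothetical names that come neither from the interview nor from any real agent framework, and how each tool gets tagged with capabilities is left to whoever configures the agent.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Capability(Enum):
    """The three ingredients of the "lethal trifecta" (hypothetical labels)."""
    PRIVATE_DATA = auto()       # can read data the user would not want leaked
    UNTRUSTED_CONTENT = auto()  # can ingest text that an attacker may control
    EXTERNAL_COMMS = auto()     # can send data outside the system (HTTP, email, ...)


# All three capabilities together form the dangerous combination.
LETHAL_TRIFECTA = frozenset(Capability)


@dataclass(frozen=True)
class Tool:
    """Hypothetical description of one tool an agent can call."""
    name: str
    capabilities: frozenset[Capability] = field(default_factory=frozenset)


def trifecta_gaps(tools: list[Tool]) -> frozenset[Capability]:
    """Return the trifecta capabilities that the combined tool set lacks."""
    combined: set[Capability] = set()
    for tool in tools:
        combined |= tool.capabilities
    return LETHAL_TRIFECTA - combined


def has_lethal_trifecta(tools: list[Tool]) -> bool:
    """True when the tools, taken together, grant all three capabilities."""
    return not trifecta_gaps(tools)


# Hypothetical tools: each lacks one leg on its own, but the pair has all three.
read_inbox = Tool("read_inbox", frozenset({Capability.PRIVATE_DATA, Capability.UNTRUSTED_CONTENT}))
fetch_url = Tool("fetch_url", frozenset({Capability.UNTRUSTED_CONTENT, Capability.EXTERNAL_COMMS}))

print(has_lethal_trifecta([read_inbox]))             # False
print(has_lethal_trifecta([read_inbox, fetch_url]))  # True
```

A check like this only flags a risky configuration; it does not detect or block an injection. Under the trifecta framing, the safer response to a `True` result is to remove one leg, for example by withholding any external communication from an agent that reads untrusted content.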

Overall, the conversation gives a useful snapshot of the current state of LLMs and their potential applications, and it highlights the need for continued research into AI security, prompt injection in particular.

Key points:

- Rapid progress in LLM development

- Growing concern over AI security risks

- The "lethal trifecta": access to private data, exposure to untrusted content, and the ability to communicate externally

- Need for continued research and development in AI security
